
More and more products and services are taking advantage of the modeling and prediction capabilities of AI. This article presents the nvidia-docker tool for integrating AI (Artificial Intelligence) software bricks into a microservice architecture. The main advantage explored here is the use of the host system's GPU (Graphical Processing Unit) resources to accelerate multiple containerized AI applications.

To understand the usefulness of nvidia-docker, we will start by describing what kind of AI can benefit from GPU acceleration. Secondly, we will present how to implement the nvidia-docker tool. Finally, we will describe what tools are available to use GPU acceleration in your applications and how to use them.

Why use GPUs in AI applications?

In the field of artificial intelligence, two main subfields are used: machine learning and deep learning. The latter is part of a larger family of machine learning methods based on artificial neural networks.

In the context of deep learning, where operations are essentially matrix multiplications, GPUs are more efficient than CPUs (Central Processing Units). This is why the use of GPUs has grown in recent years. Indeed, GPUs are considered the heart of deep learning because of their massively parallel architecture.
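To make the link between matrix multiplication and parallel hardware concrete, here is a minimal sketch in plain Python. It only illustrates that every output element of a matrix product is an independent dot product, which is the property a GPU exploits. The function names are hypothetical, and the thread pool is used purely to show the independent tasks: it is not expected to be faster than a simple loop in CPython.

```python
from concurrent.futures import ThreadPoolExecutor


def matmul_cell(a, b, i, j):
    """Compute one output element: dot product of row i of a and column j of b."""
    return sum(a[i][k] * b[k][j] for k in range(len(b)))


def matmul_parallel(a, b):
    """Multiply two matrices, submitting each output cell as an independent task."""
    rows, cols = len(a), len(b[0])
    with ThreadPoolExecutor() as pool:
        # No cell depends on any other cell, so all tasks can be scheduled at once.
        futures = [
            [pool.submit(matmul_cell, a, b, i, j) for j in range(cols)]
            for i in range(rows)
        ]
        return [[future.result() for future in row] for row in futures]


if __name__ == "__main__":
    print(matmul_parallel([[1, 2], [3, 4]], [[5, 6], [7, 8]]))
```

On a GPU, the same decomposition is mapped onto thousands of hardware threads instead of a handful of CPU workers.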

However, GPUs cannot execute just any program. Indeed, they use a specific language (CUDA for NVIDIA) to take advantage of their architecture. So, how do you use and communicate with GPUs from your applications?

The NVIDIA CUDA technology

NVIDIA CUDA (Compute Unified Device Architecture) is a parallel computing architecture combined with an API for programming GPUs. CUDA translates application code into an instruction set that GPUs can execute.

A CUDA SDK and libraries such as cuBLAS (Basic Linear Algebra Subroutines) and cuDNN (Deep Neural Network) have been developed to communicate easily and efficiently with a GPU. CUDA is available in C, C++ and Fortran. There are wrappers for other languages including Java, Python and R. For example, deep learning libraries like TensorFlow and Keras are based on these technologies.

Why use nvidia-docker?

Nvidia-docker addresses the needs of developers who want to add AI functionality to their applications, containerize them and deploy them on servers powered by NVIDIA GPUs.

The objective is to set up an architecture that allows the development and deployment of deep learning models in services available via an API. Thus, the utilization rate of GPU resources is optimized by making them available to multiple application instances.

In addition, we benefit from the advantages of containerized environments:

- Isolation of the instances of each AI model.

- Colocation of several models with their specific dependencies.

- Colocation of the same model under several versions.

- Consistent deployment of models.

- Model performance monitoring.

Natively, using a GPU in a container requires installing CUDA in the container and giving privileges to access the device. With this in mind, the nvidia-docker tool has been developed, allowing NVIDIA GPU devices to be exposed in containers in an isolated and secure manner.

At the time of writing this article, the latest version of nvidia-docker is v2. This version differs greatly from v1 in the following ways:

- Version 1: Nvidia-docker is implemented as an overlay to Docker. That is, to create the container you had to use nvidia-docker (Ex: nvidia-docker run ...), which performs the actions (among others the creation of volumes) that make the GPU devices visible in the container.

- Version 2: The deployment is simplified by replacing Docker volumes with Docker runtimes. To launch a container, it is now necessary to use the NVIDIA runtime via Docker (Ex: docker run --runtime nvidia ...).

Note that due to their different architectures, the two versions are not compatible. An application written for v1 must be rewritten for v2.
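The difference between the two invocation styles can be sketched with a small helper that builds the command line for each version. This is an illustration only: the function name and the image name `my-ai-service:latest` are hypothetical placeholders, and the helper only assembles the arguments without starting a container.

```python
import shlex


def gpu_run_command(image, command, version=2):
    """Build the argument list that starts `command` in `image` with GPU access."""
    if version == 1:
        # v1: the nvidia-docker wrapper is called instead of the docker CLI.
        prefix = ["nvidia-docker", "run"]
    elif version == 2:
        # v2: the regular docker CLI is used and the NVIDIA runtime is selected.
        prefix = ["docker", "run", "--runtime", "nvidia"]
    else:
        raise ValueError(f"unsupported nvidia-docker version: {version}")
    return prefix + [image] + list(command)


if __name__ == "__main__":
    # "my-ai-service:latest" is a placeholder image name, not a real image.
    argv = gpu_run_command("my-ai-service:latest", ["nvidia-smi"])
    print(shlex.join(argv))
```

The resulting list can be passed to `subprocess.run` on a host where Docker and the NVIDIA runtime are installed.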

Setting up nvidia-docker

The elements required to use nvidia-docker are:

- A container runtime.

- An available GPU.

- The NVIDIA Container Toolkit (main part of nvidia-docker).

Prerequisites

Docker

A container runtime is necessary to run the NVIDIA Container Toolkit. Docker is the recommended runtime, but Podman and containerd are also supported.

The official documentation describes the installation procedure for Docker.

NVIDIA driver

Drivers are required to use a GPU device. In the case of NVIDIA GPUs, the drivers corresponding to a given OS can be obtained from the NVIDIA driver download page, by filling in the information on the GPU model.

The installation of the drivers is done via the executable. For Linux, use the following commands, replacing the file name with that of the downloaded file:

chmod +x NVIDIA-Linux-x86_64-470.94.run

./NVIDIA-Linux-x86_64-470.94.run

Reboot the host machine at the end of the installation so that the installed drivers are taken into account.
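After the reboot, one way to check that the driver is working is to query it through the `nvidia-smi` utility, which is installed with the driver. The sketch below is a hedged example: the function name is hypothetical, and it assumes `nvidia-smi` is on the `PATH` when the driver is correctly installed.

```python
import shutil
import subprocess


def gpu_driver_summary(executable="nvidia-smi"):
    """Return one "name, driver version" line per GPU, or None if the tool is missing or fails."""
    path = shutil.which(executable)
    if path is None:
        # The utility is not on the PATH: the driver is probably not installed.
        return None
    result = subprocess.run(
        [path, "--query-gpu=name,driver_version", "--format=csv,noheader"],
        capture_output=True,
        text=True,
        check=False,
    )
    if result.returncode != 0:
        # The tool exists but could not talk to the driver.
        return None
    return [line.strip() for line in result.stdout.splitlines() if line.strip()]


if __name__ == "__main__":
    print(gpu_driver_summary() or "No working NVIDIA driver detected")
```

The same check can later be run inside a container to confirm that the GPU is visible there as well.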

Installing nvidia-docker

Nvidia-docker is available on the GitHub project page. To install it, follow the installation manual depending on your server and architecture specifics.

We now have an infrastructure that allows us to have isolated environments giving access to GPU resources. To use GPU acceleration in applications, several tools have been developed by NVIDIA (non-exhaustive list):

- CUDA Toolkit: a set of tools for developing software/programs that can perform computations using the CPU, RAM, and GPU. It can be used on x86, Arm and Power platforms.

- [NVIDIA cuDNN](https://developer.nvidia.com/cudnn): a library of primitives to accelerate deep learning networks and optimize GPU performance for major frameworks such as Tensorflow and Keras.

- NVIDIA cuBLAS: a library of GPU-accelerated linear algebra subroutines.

By using these tools in application code, AI and linear algebra tasks are accelerated. With the GPUs now visible, the application is able to send the data and operations to be processed on the GPU.

The CUDA Toolkit is the lowest level option. It offers the most control (memory and instructions) to build custom applications. Libraries provide an abstraction of CUDA functionality. They allow you to focus on the application development rather than the CUDA implementation.
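As an illustration of the library-level approach, the following sketch asks TensorFlow which GPUs it can see from inside the container. It assumes TensorFlow 2.x is installed in the container image. The function name is hypothetical, and the code falls back to an empty list when TensorFlow is not available.

```python
def visible_gpus():
    """Return the names of the GPUs TensorFlow can see (empty if none, or if TensorFlow is missing)."""
    try:
        import tensorflow as tf  # assumption: installed in the container image
    except ImportError:
        return []
    return [device.name for device in tf.config.list_physical_devices("GPU")]


if __name__ == "__main__":
    gpus = visible_gpus()
    print(f"{len(gpus)} GPU(s) visible: {gpus}")
```

When at least one GPU is listed, TensorFlow places supported operations on it by default, so the application code does not need to call CUDA directly.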

Once all these elements are implemented, the architecture using the nvidia-docker service is ready to use.

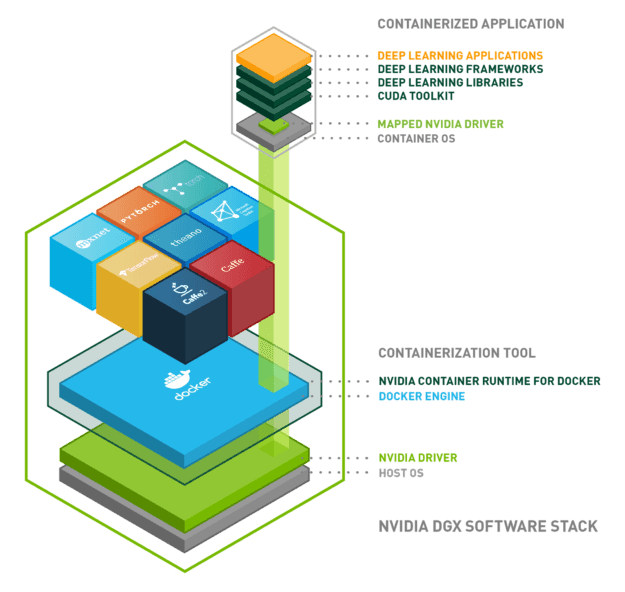

Listed here is a diagram to summarize everything we have viewed:

Summary

We have set up an architecture allowing the use of GPU resources from our applications in isolated environments. To summarize, the architecture is composed of the following bricks:

- Operating system: Linux, Windows …

- Docker: isolation of the environment using Linux containers

- NVIDIA driver: installation of the driver for the hardware in question

- NVIDIA container runtime: orchestration of the previous three

- Applications on Docker container:

  - CUDA

  - cuDNN

  - cuBLAS

  - Tensorflow/Keras

NVIDIA continues to develop tools and libraries around AI technologies, with the goal of establishing itself as a leader. Other technologies may complement nvidia-docker or may be more suitable than nvidia-docker depending on the use case.
